Ergoweb® Learning Center

We’ve published and shared thousands of ergonomics articles and resources since 1993. Search by keyword or browse for topics of interest.

Open Access Articles

February 2, 2022

Article Highlights: Fatigue Failure theory is a new ergonomics theory about how workers develop MSDs. Three recently developed ergonomics assessment tools: LiFFT, DUET and The […]

July 16, 2021

Liberty Mutual Insurance Company has conducted numerous studies over several decades that help identify and reduce risk of injury related to manual material handling tasks like lifting, […]

May 13, 2020

The COVID-19 pandemic has forced many employees to stay home, and others to work reduced schedules. This extended time away from work may result in some […]

May 12, 2020

A step-by-step guide to planning an effective ergonomics process at a company site or facility. This is a brief summary article — for more detail and […]

February 6, 2020

“Designing in” workplace ergonomics is viewed as an integral part of an effective ergonomics process. Non-office workplace environments are constantly changing – and new ergonomics challenges […]

January 28, 2020

Wearable exoskeletons and ergonomics are getting a lot of attention lately. Exoskeletal devices have already shown great promise and success as rehabilitation and disability solutions, and […]

December 16, 2019

Here’s a list of ergonomics standards, guidelines, regulations and compliance resources. It was last updated on January 29, 2020. The list is comprehensive, but we’ve surely […]

November 18, 2019

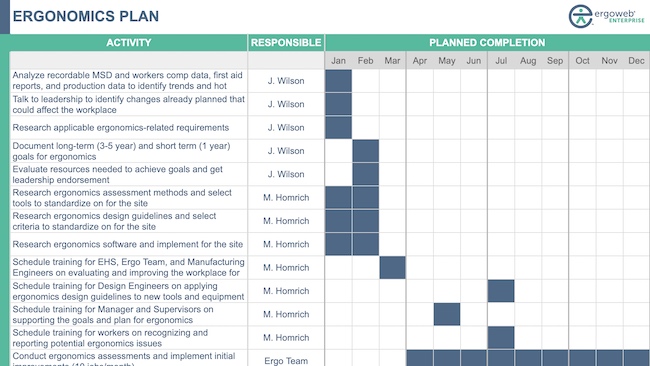

Managing ergonomics at a site requires a lot of planning, coordination, and communication. An effective ergonomics improvement initiative relies on contributions of people throughout the organization […]

November 4, 2019

A well-constructed site ergonomics plan is critical for ensuring that everyone involved in the ergonomics process understands what needs to occur, and who is responsible […]